Publications

This page presents recently published studies.

A complete list of all publications can be found here

Auditory sequence learning with degraded input: children with cochlear implants (‘nature effect’) co

Implicit sequence learning (SL) is crucial for language acquisition and has been studied in children with organic language deficits (e.g., specific language impairment). However, language delays are also seen in children with non-organic deficits, such as those with hearing loss or those from low socioeconomic status (SES) backgrounds. While some children with cochlear implants (CI) develop strong language skills, variability in performance suggests that degraded auditory input (nature) may affect SL. Low-SES children typically experience language delays due to environmental deprivation (nurture). The purpose of this study was to investigate nature versus nurture effects on auditory SL. A total of 100 participants were divided into normal hearing (NH) children, young adults, CI children from high-moderate SES, and NH children from low SES. All participants were tested with two Serial Reaction Time (SRT) tasks with speech and environmental sounds, and with cognitive tests. Results showed SL for speech and nonspeech stimuli for all participants, suggesting that SL is resilient to degradation of auditory and language input and that SL is not specific to speech. Absolute reaction time (RT) (reflecting a combination of complex processes including SL) was found to be a sensitive measure for differentiating between groups and between types of stimuli. Specifically, normal hearing groups showed longer RT for speech compared to environmental stimuli, a prolongation that was not evident for the CI group, suggesting that similar perceptual strategies apply to both sound types. In addition, the RT of low-SES children was the longest for speech stimuli compared to the other groups of children, evidence of the negative impact of language deprivation on speech processing. Age was the largest contributing factor to the results (~50%), followed by cognitive abilities (~10%). Implications for intervention include speech-processing targeted programs, provided early in the critical periods of development for low-SES children.

Different time courses of maturation for learning and generalization following auditory training in

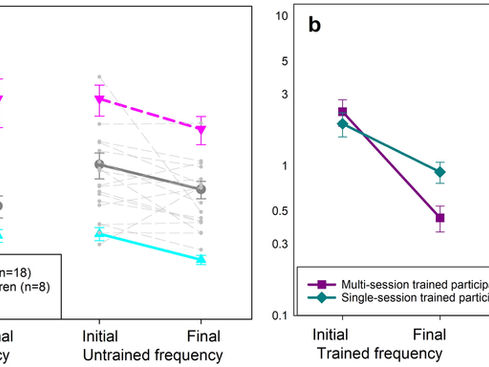

Objective: We recently demonstrated that learning abilities among school-age children vary following frequency discrimination (FD) training, with some exhibiting mature adult-like learning while others perform poorly (non-adult-like learners). This study tested the hypothesis that children’s post-training generalisation is related to their learning maturity. Additionally, it investigated how training duration influences children’s generalisation, considering the observed decrease with increased training in adults. Design: Generalisation to the untrained ear and untrained 2000 Hz frequency was assessed following single-session or nine-session 1000 Hz FD training, using an adaptive forced-choice procedure. Two additional groups served as controls for the untrained frequency. Study sample: Fifty-four children aged 7–9 years and 59 adults aged 18–30 years. Results: (1) Only adult-like learners generalised their learning gains across frequency or ear, albeit less efficiently than adults; (2) as training duration increased, children experienced reduced generalisation, similar to adults; (3) children’s performance in the untrained tasks correlated strongly with their trained task performance after the first training session. Conclusions: Auditory skill learning and its generalisation do not necessarily mature contemporaneously, although mature learning is a prerequisite for mature generalisation. Furthermore, in children, as in adults, more practice makes rather specific experts. These findings should be considered when designing training programs.


Sensorimotor contingencies in congenital hearing loss: The critical first nine months

Over the past two decades, it has become possible to compensate for severe-to-profound hearing loss using cochlear implants (CIs). Data from implanted children demonstrate that hearing and language acquisition are possible within an early critical period of 3 years; however, the earlier access to sound is provided, the better the outcomes that can be expected. While the clinical priority is to provide deaf and hard of hearing children with access to spoken language through hearing aids and CIs as early as possible, for most deaf children this access currently comes in the second or third year of life. We review the findings on neural development during the first year of life, including language development, multimodal interactions between sensory and motor systems, and brain connectivity. Some irreversible consequences of early auditory deprivation within the first year are exacerbated when the auditory system does not develop in synchrony with the other sensory and motor systems, a synchrony that incorporates hearing into other sensory and motor representations. The key role of the motor system (sensorimotor contingencies) in the development of sensory representations is discussed. We propose that the first year includes critical developmental steps that should be exploited to provide the framework for optimal functional connectivity of language and cognitive networks.

Speech recognition in noise across the life span with cognition and hearing sensitivity as mediator

Speech perception in noise relies on both sensory and cognitive systems and is known to decline with age. However, little is known about when this decline begins and how hearing sensitivity and cognition contribute across the lifespan. This study examined 357 Hebrew-speaking participants aged 7–90 years with clinically normal or age-appropriate hearing, using adaptive sentence-in-noise testing alongside measures of auditory working memory and processing speed. Results revealed a U-shaped pattern of speech reception thresholds in noise (SRTn), with peak performance in the late 30s. Polynomial modeling showed that hearing sensitivity (PTA4) began to deteriorate around age 17 years, while cognitive abilities declined later, between ages 32 and 38 years (depending on the task), coinciding with the inflection point in speech perception. Structural equation modeling (SEM) confirmed that cognitive and auditory factors mediate the relationship between age and SRTn, explaining 61% of the variance. These findings extend the interpretation of the Ease of Language Understanding (ELU) model to a broad age range of clinically normal and age-appropriate hearing individuals and contribute a developmental perspective by demonstrating how age-related changes in cognition reshape speech perception strategies even in the absence of peripheral hearing loss. Implications include the need for cognitive-informed approaches in audiological research and intervention throughout the lifespan.

The Effect of Noise on the Utilization of Fundamental Frequency and Formants for Voice Discriminatio

The ability to discriminate between talkers based on their fundamental (F0) and formant frequencies can facilitate speech comprehension in multi-talker environments. To date, voice discrimination (VD) of children and adults has only been tested in quiet conditions. This study examines the effect of speech-shaped noise on the use of F0 only, formants only, and the combined F0 + formant cues for VD. A total of 24 adults (18–35 years) and 16 children (7–10 years) underwent VD threshold assessments in quiet and noisy environments with the tested cues. Thresholds were obtained using a three-interval, three-alternative, two-down, one-up adaptive procedure. The results demonstrated that noise negatively impacted the utilization of formants for VD. Consequently, F0 became the lead cue for VD for the adults in noisy environments, whereas the formants were the more accessible cue for VD in quiet environments. For children, however, both cues were poorly utilized in noisy environments. The finding that robust cues such as formants are not readily available for VD in noisy conditions has significant clinical implications. Specifically, the reliance on F0 in noisy environments highlights the difficulties that children encounter in multi-talker environments due to their poor F0 discrimination and emphasizes the importance of maintaining F0 cues in speech-processing strategies tailored for hearing devices.

The validity of LENA technology for assessing the linguistic environment and interactions of infants

The present study assessed LENA’s suitability as a tool for monitoring future language interventions by evaluating its reliability, construct validity, and criterion validity in infants learning Hebrew and Arabic, across low and high levels of maternal education. Participants were 32 infants aged 3 to 11 months (16 in each language) and their mothers, whose socioeconomic status (SES) was determined based on their years of education (high or low maternal education: HME or LME). The results showed (1) good reliability for LENA’s automatic counts of adult words (AWC), conversational turns (CTC), and infant vocalizations (CVC), based on the positive associations and fair to excellent agreement between the manual and automatic counts; (2) good construct validity, based on significantly higher counts for HME vs. LME and positive associations between LENA’s automatic vocal assessment (AVA) and developmental questionnaire (DA) and age; and (3) good concurrent criterion validity, based on the positive associations between the LENA counts for CTC, CVC, AVA, and DA and the scores on the preverbal parent questionnaire (PRISE). The present study supports the use of LENA in early intervention programs for infants whose families speak Hebrew or Arabic. LENA could be used to monitor the efficacy of these programs as well as to provide feedback to parents on the amount of language experience their infants are getting and their progress in vocal production. The results also indicate a potential utility of LENA in assessing linguistic environments and interactions in Hebrew- and Arabic-speaking infants with developmental disorders, such as hearing impairment and cerebral palsy.
